Introduction

Artificial intelligence systems have traditionally focused on analyzing language, images, and structured data. Most early-generation AI technologies, however, ignored a crucial aspect of human communication: emotion. In real-world interactions, tone, facial expressions, and subtle changes in voice often carry as much meaning as the words themselves. As digital platforms increasingly mediate conversations through customer service tools, voice assistants, and interactive applications, the inability of machines to interpret emotional context has become a notable limitation.

This gap has contributed to the development of a specialized category of technology often referred to as emotion-aware AI or affective computing. These systems attempt to interpret emotional signals from human inputs such as speech patterns, facial expressions, and text sentiment. The goal is not to replicate human emotion but to recognize emotional cues that may influence communication.

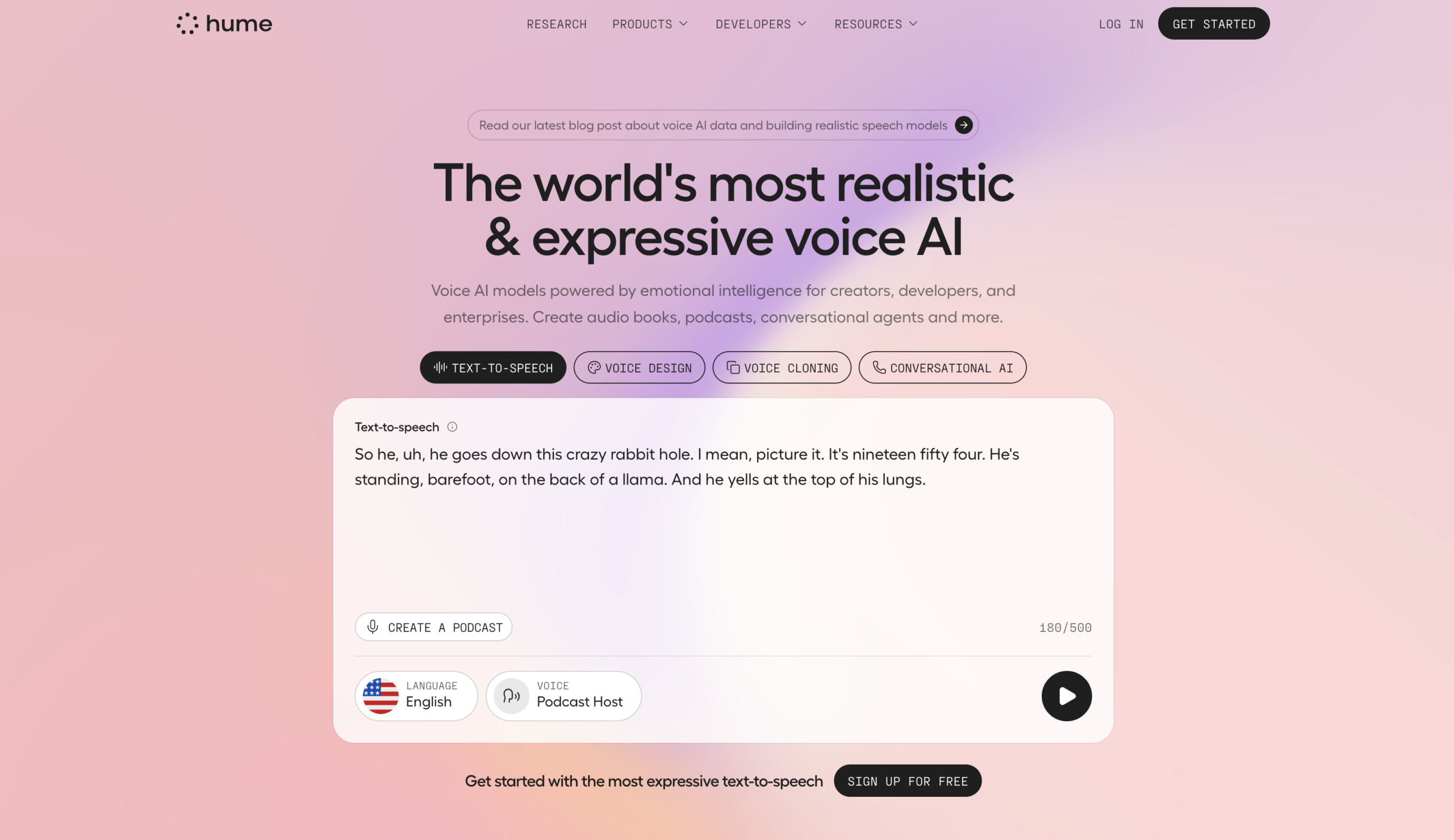

Within this emerging field, Hume AI is one example of a platform designed to analyze emotional signals using machine learning models. The system focuses on detecting emotional information embedded in speech, facial movements, and other expressive behaviors. Rather than acting solely as a conversational AI, it operates primarily as a research and developer-oriented tool for building emotionally responsive applications.

Understanding how tools like Hume AI function requires examining their technical foundations, practical uses, and the broader context in which emotion-recognition technologies are being developed.

What Is Hume AI?

Hume AI is an artificial intelligence platform focused on emotion recognition and affective signal analysis. The system analyzes various forms of human expression—including voice, facial movement, and language—to identify emotional patterns using machine learning models.

The platform belongs to the broader discipline of affective computing, a field that combines computer science, psychology, linguistics, and neuroscience. Affective computing systems aim to interpret emotional information that humans communicate both intentionally and unintentionally.

Hume AI processes expressive data through specialized models trained on large datasets of emotional cues. These cues may include:

- Changes in vocal tone or pitch
- Subtle facial muscle movements
- Linguistic sentiment indicators
- Behavioral signals present in multimodal communication

The platform typically functions as an API-based system, meaning developers can integrate its capabilities into applications such as conversational agents, video analysis systems, digital therapy tools, or customer interaction platforms.

Unlike traditional natural language processing tools that focus only on textual meaning, Hume AI attempts to analyze how something is said, not just what is said. This distinction places it within a growing ecosystem of technologies designed to interpret emotional context in digital interactions.

Key Features Explained

Emotion-recognition platforms often include several layers of analysis, and Hume AI incorporates multiple technical components intended to capture different types of expressive signals.

Voice-Based Emotion Analysis

One core capability of Hume AI involves analyzing acoustic characteristics of speech. Instead of evaluating only the words spoken, the system examines vocal elements such as:

- Tone and pitch variations
- Speech rhythm and pacing
- Volume changes
- Vocal tension or softness

These acoustic signals can reveal patterns commonly associated with emotional states like excitement, frustration, or calmness. The system processes audio inputs through machine learning models trained on datasets containing annotated emotional expressions.
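As a rough illustration of the kind of acoustic measurements listed above, the sketch below computes two generic signal-processing descriptors from raw samples: root-mean-square energy (a simple proxy for volume) and zero-crossing rate (a crude correlate of pitch for clean tones). These are not Hume AI's actual features. The function names and the synthetic tones standing in for recorded speech are assumptions made for illustration.

```python
import math

def rms_energy(samples):
    """Root-mean-square amplitude: a simple proxy for loudness."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def zero_crossing_rate(samples):
    """Fraction of adjacent sample pairs whose signs differ."""
    if len(samples) < 2:
        return 0.0
    crossings = sum(
        1 for a, b in zip(samples, samples[1:]) if (a >= 0) != (b >= 0)
    )
    return crossings / (len(samples) - 1)

def sine_wave(freq_hz, sample_rate=8000, duration_s=0.5, amplitude=1.0):
    """Synthetic tone used here in place of a recorded voice frame."""
    n = int(sample_rate * duration_s)
    return [
        amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
        for i in range(n)
    ]

# Two hypothetical frames: a quiet, lower tone and a louder, higher one.
quiet_low = sine_wave(120, amplitude=0.2)
loud_high = sine_wave(240, amplitude=0.8)

print(rms_energy(quiet_low), zero_crossing_rate(quiet_low))
print(rms_energy(loud_high), zero_crossing_rate(loud_high))
```

In practice, descriptors like these would be only inputs to a trained model; on their own they say nothing about emotional state.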

Voice-based emotion analysis has applications in areas such as conversational AI research, communication studies, and human–computer interaction design.

Facial Expression Recognition

Hume AI also analyzes facial movements captured in images or video. Facial analysis in this context does not aim to establish who a person is; it focuses on micro-expressions and muscle movements.

Typical features analyzed include:

- Eyebrow position and movement
- Eye openness or narrowing
- Lip tension and curvature
- Jaw positioning

By examining combinations of these features, the system estimates emotional expressions associated with facial behavior. These insights can be used in video analysis systems, research environments, and human interaction studies.
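To make one of these features concrete, the sketch below derives a simple eye-openness ratio from 2D landmark coordinates: the gap between the eyelids divided by the width of the eye. This is a deliberately simplified geometric measure, not the method Hume AI uses. The landmark coordinates are invented, and the function name `eye_openness` is hypothetical.

```python
import math

def distance(p, q):
    """Euclidean distance between two (x, y) points."""
    return math.hypot(p[0] - q[0], p[1] - q[1])

def eye_openness(left_corner, right_corner, upper_lid, lower_lid):
    """Lid gap divided by eye width; larger values mean a more open eye."""
    width = distance(left_corner, right_corner)
    if width == 0:
        raise ValueError("eye corners must not coincide")
    return distance(upper_lid, lower_lid) / width

# Invented pixel coordinates for the same eye in two frames.
wide = eye_openness((0, 0), (30, 0), (15, -6), (15, 6))
narrow = eye_openness((0, 0), (30, 0), (15, -2), (15, 2))

print(wide, narrow)
```

Dividing by eye width keeps the ratio comparable when the face appears larger or smaller in the frame.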

Multimodal Emotion Modeling

Human communication rarely relies on a single channel. People often express emotions through voice, facial expressions, and language simultaneously. Hume AI attempts to combine signals from multiple sources into a unified analysis model.

Multimodal analysis may involve processing:

- Audio recordings
- Video footage
- Written or spoken language

Combining these signals can provide more context than analyzing a single channel alone. For example, a sentence that appears neutral in text may carry emotional meaning when tone of voice is included.
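One common way to combine channels is late fusion, where each modality produces its own scores and the results are merged afterward. The sketch below uses a weighted average to show how a sentence that looks neutral in text can shift once a voice signal is included. The scores, weights, and the `fuse_scores` helper are all hypothetical; nothing here reflects how Hume AI actually fuses modalities.

```python
def fuse_scores(per_modality, weights):
    """Weighted average of emotion scores across the modalities present."""
    total_weight = sum(weights[m] for m in per_modality)
    if total_weight <= 0:
        raise ValueError("weights for the supplied modalities must be positive")
    fused = {}
    for modality, scores in per_modality.items():
        share = weights[modality] / total_weight
        for emotion, score in scores.items():
            fused[emotion] = fused.get(emotion, 0.0) + share * score
    return fused

# Assumed weights: voice is trusted twice as much as text.
weights = {"text": 1.0, "voice": 2.0}

text_only = {"text": {"neutral": 0.9, "frustration": 0.1}}
text_and_voice = {
    "text": {"neutral": 0.9, "frustration": 0.1},
    "voice": {"neutral": 0.2, "frustration": 0.8},
}

fused_text = fuse_scores(text_only, weights)
fused_both = fuse_scores(text_and_voice, weights)

print(max(fused_text, key=fused_text.get))
print(max(fused_both, key=fused_both.get))
```

Normalizing by the weights of the modalities actually supplied lets the same function handle inputs where a channel is missing.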

Text and Language Emotion Detection

In addition to audio and visual data, Hume AI can analyze emotional signals within written language. This involves examining word choice, sentence structure, and contextual cues to estimate emotional tone.

Language-based emotion analysis differs from traditional sentiment analysis. Sentiment systems often categorize text as positive, negative, or neutral. Emotion recognition tools attempt to identify a broader set of emotional patterns, such as curiosity, disappointment, or enthusiasm.
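The difference is easiest to see in the shape of the output. The toy sketch below returns a single polarity label for the sentiment view and a score per emotion for the emotion view. The tiny word lexicon and polarity table are invented for illustration, and real systems rely on trained models rather than word lookups.

```python
# Hypothetical lexicon mapping words to emotion weights.
EMOTION_LEXICON = {
    "wonder": {"curiosity": 1.0},
    "curious": {"curiosity": 1.0},
    "unfortunately": {"disappointment": 1.0},
    "excited": {"enthusiasm": 1.0},
    "love": {"enthusiasm": 0.8},
}

# Assumed polarity of each emotion, used to collapse scores into sentiment.
POLARITY = {"curiosity": 0, "disappointment": -1, "enthusiasm": 1}

def tokenize(text):
    """Lowercase words with surrounding punctuation removed."""
    return [w.strip(".,!?;:").lower() for w in text.split()]

def emotion_scores(text):
    """Emotion view: one accumulated score per emotion found in the text."""
    scores = {}
    for word in tokenize(text):
        for emotion, weight in EMOTION_LEXICON.get(word, {}).items():
            scores[emotion] = scores.get(emotion, 0.0) + weight
    return scores

def sentiment_label(text):
    """Sentiment view: a single positive, negative, or neutral label."""
    total = sum(POLARITY[e] * s for e, s in emotion_scores(text).items())
    if total > 0:
        return "positive"
    if total < 0:
        return "negative"
    return "neutral"

sample = "I wonder how this works. Unfortunately the demo failed, but I love the idea."
print(sentiment_label(sample))
print(emotion_scores(sample))
```

The single label hides the fact that the sample mixes curiosity, disappointment, and enthusiasm, which is the information an emotion-level analysis tries to keep.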

API-Based Integration

Hume AI is typically accessed through developer interfaces that allow integration into existing applications. This architecture allows researchers and software developers to send input data—such as audio clips or text—and receive structured emotional analysis results.

API-based platforms are commonly used in AI development because they allow modular integration without requiring organizations to build emotion-recognition systems from scratch.
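The request-and-response pattern described above might look roughly like the following sketch. The JSON field names, the response shape, and the helper functions are hypothetical and are not taken from Hume AI's documentation; no network call is made, and a canned response stands in for a real service.

```python
import json
from dataclasses import dataclass

@dataclass
class EmotionResult:
    label: str
    score: float

def build_request(text):
    """Serialize a text input into a JSON request body (hypothetical shape)."""
    return json.dumps({"input": {"type": "text", "content": text}})

def parse_response(body):
    """Turn a JSON response body into results sorted by descending score."""
    payload = json.loads(body)
    results = [
        EmotionResult(item["label"], float(item["score"]))
        for item in payload["emotions"]
    ]
    return sorted(results, key=lambda r: r.score, reverse=True)

# Canned response standing in for what a service might return.
fake_response = (
    '{"emotions": ['
    '{"label": "calmness", "score": 0.21}, '
    '{"label": "frustration", "score": 0.64}]}'
)

request_body = build_request("I have been waiting for an hour.")
top = parse_response(fake_response)[0]
print(request_body)
print(top)
```

Anyone integrating a real service should take the actual endpoints, authentication, and schemas from that provider's official API reference.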

Common Use Cases

Emotion recognition technologies like Hume AI are primarily used in research, development, and experimental environments. Several categories of applications have emerged in recent years.

Human–Computer Interaction Research

Researchers studying human interaction with technology often use emotion recognition tools to evaluate how people respond to digital systems. By analyzing vocal or facial responses, researchers can observe patterns that may indicate confusion, satisfaction, or frustration.

These insights can inform the design of more intuitive interfaces.

Conversational AI Development

Voice assistants and chatbots are increasingly expected to handle complex human interactions. Emotion analysis systems can provide contextual signals that help developers understand how users respond emotionally to automated conversations.

Although emotion recognition does not replicate human empathy, it can help identify communication patterns, such as points in a conversation where users tend to become frustrated.

Customer Interaction Analysis

Organizations studying customer service interactions sometimes analyze call recordings or chat logs to understand emotional responses. Emotion detection tools may help identify moments where conversations become tense or where users express satisfaction.

This use case generally appears in analytical or research contexts rather than real-time decision-making.
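An offline analysis of this kind could be sketched as follows: given per-segment scores for a recorded call, merge consecutive high-scoring segments into time spans worth reviewing. The segment format `(start_seconds, end_seconds, score)`, the threshold, and the `tense_spans` helper are assumptions made for illustration.

```python
def tense_spans(segments, threshold=0.6):
    """Merge consecutive segments at or above the threshold into (start, end) spans."""
    spans = []
    current_start = None
    current_end = None
    for start, end, score in segments:
        if score >= threshold:
            if current_start is None:
                current_start = start
            current_end = end
        elif current_start is not None:
            # A calm segment closes the span that was in progress.
            spans.append((current_start, current_end))
            current_start = None
    if current_start is not None:
        spans.append((current_start, current_end))
    return spans

# Hypothetical tension scores for five ten-second segments of a call.
call = [
    (0, 10, 0.10),
    (10, 20, 0.70),
    (20, 30, 0.80),
    (30, 40, 0.20),
    (40, 50, 0.65),
]

print(tense_spans(call))
```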

Digital Health and Wellbeing Research

Some academic and experimental projects use emotion analysis technologies to study patterns in speech or facial behavior that may correlate with emotional wellbeing.

Researchers exploring mental health technologies occasionally analyze expressive signals from speech or video to understand behavioral patterns over time. Such research typically requires careful ethical oversight and data privacy considerations.

Media and Behavioral Studies

Emotion recognition tools are sometimes used to analyze audience reactions to media content. Researchers may study facial responses or vocal reactions during film viewing, gaming experiences, or interactive storytelling.

This allows analysts to explore how audiences emotionally respond to different types of content.

Potential Advantages

Emotion recognition platforms offer several potential benefits when used responsibly in research and development settings.

Expanded Context in Communication Analysis

Traditional data analysis often focuses on explicit content, such as written text or numerical data. Emotion recognition tools add another layer by examining expressive signals that convey emotional context.

This may provide deeper insights into human communication patterns.

Multimodal Data Processing

Systems capable of analyzing voice, facial expressions, and language together may offer a more comprehensive analysis than single-channel approaches.

Multimodal models are often better suited to complex real-world interactions.

Research Applications in Behavioral Science

Emotion recognition tools create opportunities for interdisciplinary research involving psychology, linguistics, and computer science. These technologies allow researchers to study emotional expression patterns in large datasets.

Development Support for Interactive Systems

Developers building conversational interfaces or interactive environments may use emotion analysis to evaluate how users respond during testing phases.

Such insights can contribute to iterative design processes.

Limitations & Considerations

Despite growing interest in emotion-aware AI, several technical and ethical limitations remain.

Accuracy Challenges

Human emotions are complex and culturally influenced. Facial expressions and vocal patterns do not always correspond directly to specific emotional states. As a result, emotion recognition systems may produce uncertain or context-dependent interpretations.

Accuracy can vary depending on factors such as lighting conditions, audio quality, cultural variation, and individual expression styles.
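One way an application might respect this uncertainty is to decline to report a label when the scores are weak or too close to call. The sketch below is a minimal example of that idea; the thresholds and the `interpret` function are hypothetical and would need tuning against real data.

```python
def interpret(scores, min_score=0.5, min_margin=0.15):
    """Return the top label, or "uncertain" when the evidence is weak or ambiguous."""
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    if not ranked:
        return "uncertain"
    top_label, top_score = ranked[0]
    runner_up = ranked[1][1] if len(ranked) > 1 else 0.0
    if top_score < min_score or top_score - runner_up < min_margin:
        return "uncertain"
    return top_label

print(interpret({"calmness": 0.80, "frustration": 0.10}))
print(interpret({"calmness": 0.45, "frustration": 0.40}))
print(interpret({"calmness": 0.55, "frustration": 0.50}))
```

The first call has a clear winner, while the other two fall back to "uncertain" because the top score is too low or the margin over the runner-up is too small.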

Privacy and Data Sensitivity

Analyzing emotional signals often involves processing audio recordings, video footage, or biometric information. These forms of data may raise privacy concerns if not handled carefully.

Organizations working with emotion-recognition technologies typically need to implement strong data protection and consent frameworks.

Ethical Concerns

The interpretation of emotional states by machines can raise ethical questions about surveillance, workplace monitoring, or psychological profiling. Researchers and policymakers continue to debate appropriate guidelines for the use of affective computing systems.

Cultural Interpretation Variability

Emotional expressions can vary significantly across cultures and individuals. A gesture or vocal tone interpreted one way in one cultural context may carry a different meaning elsewhere.

Training datasets and models must account for such variation to avoid biased interpretations.
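A basic check for this kind of bias is to measure accuracy separately for each group in an evaluation set instead of reporting a single overall figure. The sketch below assumes records of the form `(group, predicted_label, annotated_label)`; the group names and data are invented for illustration.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """Fraction of predictions matching the annotation, computed per group."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}

# Invented evaluation records: (group, predicted, annotated).
records = [
    ("group_a", "joy", "joy"),
    ("group_a", "calm", "calm"),
    ("group_a", "joy", "joy"),
    ("group_a", "anger", "calm"),
    ("group_b", "joy", "calm"),
    ("group_b", "calm", "calm"),
    ("group_b", "anger", "joy"),
    ("group_b", "joy", "joy"),
]

print(accuracy_by_group(records))
```

A large gap between groups is a signal to revisit the training data or the labels before relying on the model's interpretations.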

Who Should Consider Hume AI

Hume AI may be relevant for individuals and organizations engaged in research or experimental development involving human communication.

Examples include:

- Academic researchers studying emotion recognition or behavioral analysis
- Developers building experimental conversational AI systems
- Human–computer interaction researchers
- Media analytics researchers studying audience reactions
- Data scientists exploring multimodal machine learning models

These groups often require tools capable of processing expressive signals in large datasets.

Who May Want to Avoid It

Certain users may find limited relevance in emotion recognition platforms.

These may include:

- Individuals seeking general-purpose AI tools
- Small projects without access to audio or video data
- Organizations without clear ethical or privacy frameworks for biometric analysis
- Applications where emotional interpretation could introduce risk or ambiguity

Emotion recognition technology is most useful when applied in controlled research or development environments with appropriate oversight.

Comparison With Similar Tools

Hume AI exists within a broader ecosystem of affective computing technologies. Several other platforms and research tools also focus on emotion recognition.

Some systems specialize exclusively in facial expression analysis, while others concentrate on speech emotion detection. Certain research frameworks provide open-source models designed for academic experimentation, whereas commercial platforms often offer structured APIs and developer tools.

Compared with single-channel solutions, platforms like Hume AI emphasize multimodal emotion analysis, combining voice, text, and visual inputs. This approach attempts to provide a broader understanding of expressive behavior.

However, many tools in this space share similar challenges, including accuracy limitations and the need for carefully curated datasets. As the field evolves, researchers continue to evaluate how different models interpret emotional signals across diverse populations and contexts.

Final Educational Summary

Emotion recognition technologies are a growing area of artificial intelligence research that aims to bridge the gap between human communication and machine interpretation. By analyzing vocal tone, facial expressions, and language patterns, these systems try to identify emotional cues embedded in human interactions.

Hume AI is one example of a platform designed to explore this capability through machine learning models focused on expressive behavior. Its functionality includes voice emotion detection, facial expression analysis, text-based emotional interpretation, and multimodal signal processing.

While such technologies offer potential benefits in research, behavioral studies, and interactive system design, they also present significant challenges. Issues related to privacy, ethical use, cultural interpretation, and model accuracy remain important considerations.

As affective computing continues to develop, tools like Hume AI contribute to broader discussions about how artificial intelligence systems can interpret—not replicate—human emotional expression within digital environments.
