Introduction

The demand for digital voice content has expanded rapidly across media, education, software applications, and assistive technologies. Producing spoken content traditionally requires recording equipment, trained voice talent, and editing workflows. While early text-to-speech systems reduced production effort, they often lacked emotional realism and natural rhythm.

ElevenLabs develops AI models designed to generate highly realistic synthetic speech and replicate human voices digitally. Its technology focuses on expressive delivery rather than purely functional narration.

What Is ElevenLabs

ElevenLabs is a cloud-based artificial intelligence platform that generates spoken audio from text inputs. Beyond standard text-to-speech, it enables digital voice replication and language adaptation.

The platform combines machine learning voice modeling with scalable cloud processing. It is accessible through a browser interface and developer APIs.

Core offerings include:

- AI-generated speech synthesis
- Voice replication technology
- Audio-to-audio voice transformation
- Multilingual speech adaptation
- Integration tools for software systems

Key Features Explained

AI Speech Generation

Users can input written scripts and receive downloadable audio files. The system attempts to model human-like pacing, tonal shifts, and emphasis patterns.

Settings allow modification of voice consistency and expressive variation, depending on the desired output style.

Digital Voice Replication

The system can create a synthetic version of a recorded voice. Short samples produce quick approximations, while extended recordings improve accuracy and tonal depth.

Voice replication is typically used for brand continuity, accessibility preservation, or fictional character development.

Speech Transformation

Audio recordings can be converted into another digital voice while maintaining original timing. This may support creative storytelling or localization workflows.

Language Expansion Tools

The platform supports multiple languages and offers translation-based voice generation. The goal is to keep emotional tone aligned with the original speech.

Voice Collection Library

A built-in voice catalog provides access to various accents, styles, and tones. Users who do not require custom cloning can select pre-designed voices.

Developer Access

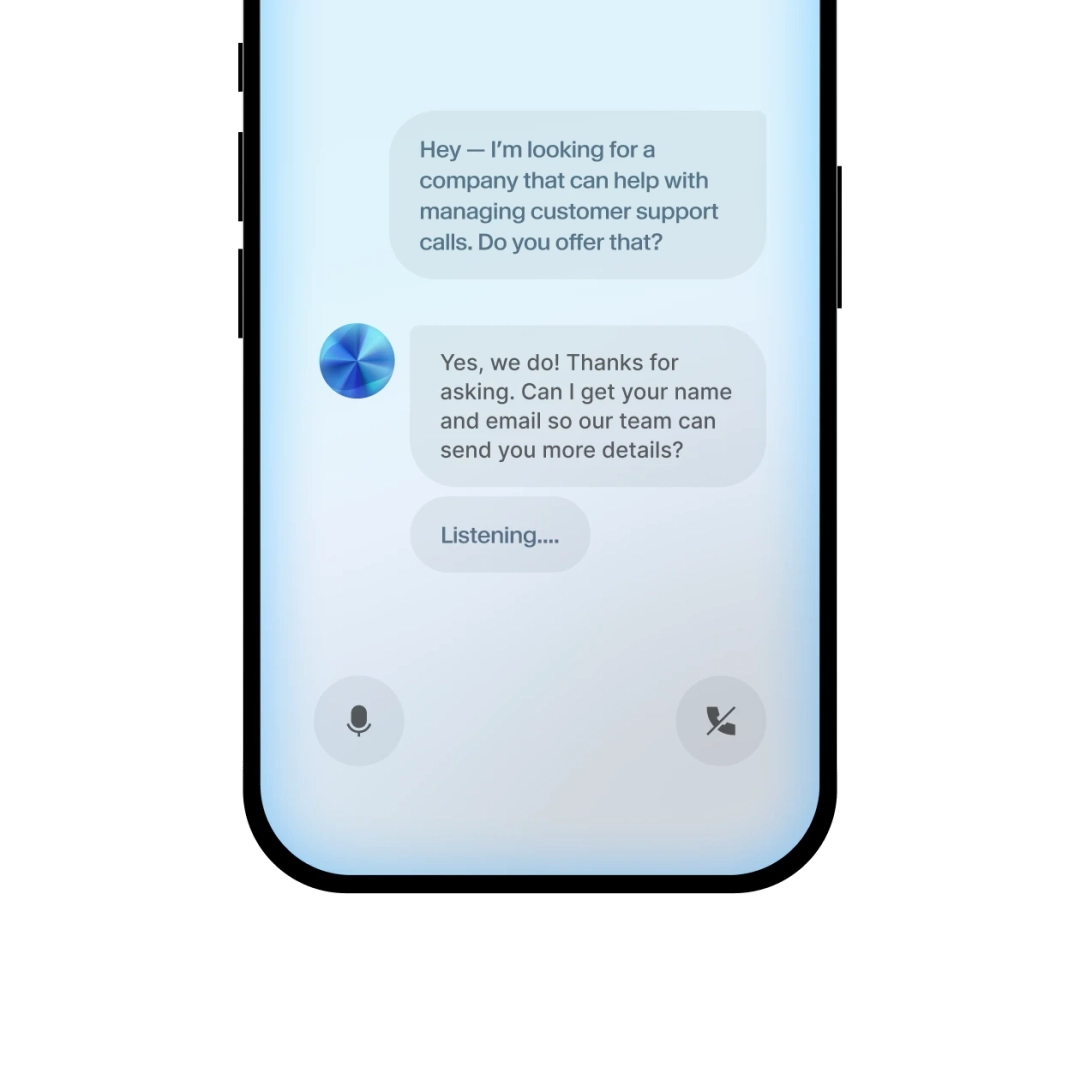

Through API endpoints, developers can integrate AI speech into applications such as chat interfaces, training software, interactive systems, or digital assistants.
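As a rough illustration of what such an integration involves, the sketch below builds (but does not send) an HTTP request for a text-to-speech call using only the Python standard library. The base URL, path layout, `xi-api-key` header, and `voice_settings` field names are assumptions to verify against the official API reference, and `build_tts_request` is a hypothetical helper name, not part of any SDK.

```python
import json
import urllib.request

# Assumed base URL and path layout; confirm against the current API reference.
API_BASE = "https://api.elevenlabs.io/v1"


def build_tts_request(api_key, voice_id, text, stability=0.5, similarity_boost=0.75):
    """Build a POST request for a text-to-speech call without sending it.

    The two settings stand in for the consistency and expressiveness controls
    described earlier; their names and 0-to-1 range are assumptions.
    """
    if not text.strip():
        raise ValueError("text must not be empty")
    for name, value in (("stability", stability), ("similarity_boost", similarity_boost)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")

    payload = {
        "text": text,
        "voice_settings": {
            "stability": stability,
            "similarity_boost": similarity_boost,
        },
    }
    return urllib.request.Request(
        url=f"{API_BASE}/text-to-speech/{voice_id}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"xi-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send the call, and the response body would then be written to an audio file. Keeping request construction separate from sending makes the payload easy to inspect and test without network access or a real API key.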

Common Use Cases

Media Production

Video producers and educators may generate voice narration without organizing recording sessions.

Audiobook Drafting

Writers can create spoken drafts of manuscripts for early listening tests or distribution experiments.

Software & App Development

Developers may embed real-time AI voices in customer support tools, games, or learning platforms.

Accessibility Technology

Voice replication may help individuals maintain a version of their natural speech for assistive communication.

Internal Business Training

Companies can automate instructional content narration across departments.

Potential Advantages

Expressive Output

The system attempts to move beyond monotone AI narration by simulating emotional variation.

Reduced Production Workflow

Audio can be generated on demand without physical recording infrastructure.

Language Coverage

Support for multiple languages enables content scaling across regions.

Integration Flexibility

API availability allows adaptation within custom-built systems.

Usage-Based Scaling

Plan tiers vary according to how much voice content is generated.

Limitations & Considerations

Scaling Costs

Teams that generate large volumes of audio may face higher subscription costs.
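To make the scaling question concrete, the sketch below compares monthly cost across plans for a given character volume. Every figure in it is hypothetical: the plan names, fees, quotas, and overage rates are invented for illustration and do not reflect actual ElevenLabs pricing. Amounts are kept in integer cents to avoid floating-point rounding.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    monthly_fee_cents: int     # hypothetical base price
    included_chars: int        # hypothetical monthly character quota
    overage_cents_per_1k: int  # hypothetical price per extra 1,000 characters


def monthly_cost_cents(plan, chars_used):
    """Base fee plus overage, billed in whole blocks of 1,000 characters."""
    extra = max(0, chars_used - plan.included_chars)
    blocks = -(-extra // 1000)  # ceiling division
    return plan.monthly_fee_cents + blocks * plan.overage_cents_per_1k


def cheapest(plans, chars_used):
    """Return the plan with the lowest total cost at this usage level."""
    return min(plans, key=lambda p: monthly_cost_cents(p, chars_used))


# Invented example plans, not real pricing.
PLANS = [
    Plan("small", 1000, 100_000, 30),
    Plan("large", 5000, 1_000_000, 10),
]
```

With these invented numbers, `cheapest(PLANS, 150_500)` selects the small plan while `cheapest(PLANS, 400_000)` selects the large one, which shows how the break-even point moves with volume. Substituting real plan figures from the vendor's pricing page gives a quick estimate before committing to a tier.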

Pronunciation Adjustments

Specialized terminology may require manual corrections.
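One way to apply such corrections is to respell problem terms in the script before it is submitted for synthesis. The sketch below is a generic text substitution, not a platform feature; the `RESPELLINGS` table and the `apply_respellings` helper are hypothetical, and the right respelling depends on the voice and language in use.

```python
import re

# Hypothetical respellings chosen for illustration only.
RESPELLINGS = {
    "SQL": "sequel",
    "nginx": "engine x",
    "kubectl": "cube control",
}


def apply_respellings(text, table):
    """Replace whole-word, case-sensitive matches of each term with its respelling."""
    if not table:
        return text
    # Longest terms first so overlapping entries prefer the longer match.
    terms = sorted(table, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(re.escape(t) for t in terms) + r")\b")
    return pattern.sub(lambda m: table[m.group(1)], text)
```

For example, `apply_respellings("Restart nginx, then run the SQL script.", RESPELLINGS)` yields `"Restart engine x, then run the sequel script."`. The word-boundary match leaves terms embedded in longer words, such as "MySQL", unchanged.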

Ethical Responsibility

Voice cloning requires appropriate consent and authorization. Unauthorized replication may present legal risks.

Internet Dependency

Because ElevenLabs is a cloud service, offline voice generation is not supported.

Creative Performance Boundaries

Although expressive, AI-generated speech may not fully replicate the nuance of professional voice acting in emotionally complex scenarios.

Who Should Consider ElevenLabs

- Digital creators producing frequent narration
- Technology teams integrating AI audio
- Multilingual media producers
- Accessibility-focused organizations
- Startups testing conversational interfaces

Who May Want to Avoid It

- Projects requiring offline-only tools
- Highly regulated environments with strict data policies
- Productions needing theatrical-level emotional performance
- Extremely low-volume users who prefer free-only solutions

Comparison With Similar Platforms

AI speech generation is a growing category with several competitors:

- Play.ht
- WellSaid Labs
- Murf AI

While many tools provide synthetic speech, differences typically appear in voice realism, cloning accuracy, enterprise controls, and pricing structure. Evaluation should be based on project scale, integration requirements, and governance policies.

Final Educational Summary

ElevenLabs provides AI-based voice synthesis and digital voice replication through a cloud platform. Its focus lies in producing expressive and adaptable speech rather than simple mechanical narration.

The system can reduce the time required to produce audio content and enable multilingual expansion. However, users should carefully assess cost scalability, data considerations, and the ethical implications of voice cloning.

Adoption decisions should align with project objectives, compliance requirements, and long-term production strategy.

Disclosure

This review is independently prepared for educational analysis. It is not sponsored content and does not promote or recommend purchasing decisions.